Building TweetEffect taught me a few lessons and exposed some annoyances of building on third-party APIs. Above all, I had to rethink, and in places violate, some of the best practices I’ve been advocating for years.

First of all, TweetEffect was meant to be a demo for a university hack day and I didn’t plan for it to be a big success. Therefore I cobbled it together rather than planning the whole thing. What I wanted to build was a small tool that shows me my latest Twitter updates and analyzes the changes in follower numbers. It then maps each change to the updates that happened before it to show which ones might have been the cause.

The TweetEffect wishlist

I had a few requirements, mostly things I wanted to avoid:

- Users shouldn’t have to give me their Twitter login data – this is just wrong, no matter how you put it

- I didn’t want to cache any data on my server, for the same reason and to avoid my DB getting hammered (this blog runs on the same one :-))

- I wanted end users to be able to use the site or simply get the results with a widget and subsequently with an API.

The PHP solution

Now, the normal way I would go about building a solution like TweetEffect is to build it in PHP and then enhance it with JavaScript. This means it will work for everybody – including me on my BlackBerry – and I have PHP at my disposal, which is much richer than JavaScript when it comes to XML conversion or even array handling.

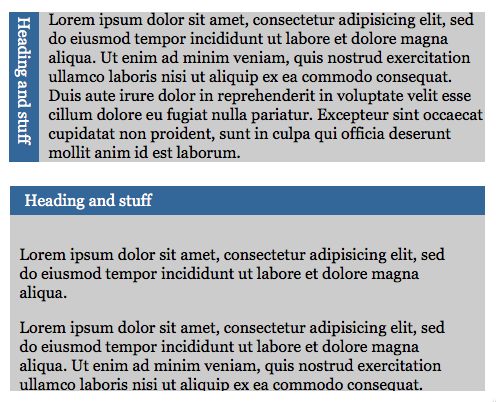

The normal way of dealing with it would be something like this:

The problem I ran into, even during development, is that if your own API calls a third-party API server-side, you can quickly hit its rate limit and get blocked for an hour.

The only workaround is to cache the results locally – something I wanted to avoid for accuracy and the sanity of my server. Other services do the caching for you (like Gnip), but then you also run into the issue of outdated data. During development it is a good idea to work against a local flat data file instead of the live API – this also cuts down on development time, as you never have to wait for the third-party servers.
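The flat-file approach for development can be sketched as follows. This is a minimal sketch in Node.js-style JavaScript, not the code TweetEffect uses; `loadUpdates`, `useFixture`, `fixturePath` and `fetchLive` are hypothetical names, and the fixture is assumed to be a JSON file you saved from an earlier API response.

```javascript
const fs = require("fs");

// Returns the list of updates.
// config.useFixture  - true during development (hypothetical flag)
// config.fixturePath - path to a saved JSON response (hypothetical)
// fetchLive          - function that calls the real third-party API
function loadUpdates(config, fetchLive) {
  if (config.useFixture) {
    // Development: read the stored response, never touch the remote API.
    return JSON.parse(fs.readFileSync(config.fixturePath, "utf8"));
  }
  // Production: delegate to the caller-supplied live request.
  return fetchLive();
}

// Usage (assuming ./fixtures/updates.json exists):
// const updates = loadUpdates(
//   { useFixture: true, fixturePath: "./fixtures/updates.json" },
//   function () { throw new Error("live API is not called in development"); }
// );
```

Because the live request is passed in as a function, switching between the fixture and the real API is a one-line configuration change.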

Crowdsourcing API calls to avoid reaching the limit

Normally, progressive enhancement could be used here to override the form’s submit event, show a slicker interface, and sort the data once it has been loaded without reloading the page. This would cut down on the number of times you access the third-party API.

However, if the API is more restrictive (like Twitter’s) but offers JSON output, you can work around the issue by not calling the API server-side and instead creating script nodes dynamically to get the data. That way you’re not the one requesting it; the computers of your users are doing it for you. Each end user can then only exceed the API limit individually, instead of all of them exhausting it together through your server. The obvious drawback is that users without JavaScript don’t get any results.
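The dynamic script node technique might look roughly like this. It is a sketch under assumptions: the endpoint URL, the `callback` parameter name and the function names are made up for illustration, and the real API may expect something different, so check its documentation.

```javascript
// Builds the request URL. The "user" and "callback" parameter names are
// assumptions for this sketch, not a documented API.
function buildScriptUrl(endpoint, userName, callbackName) {
  return endpoint +
    "?user=" + encodeURIComponent(userName) +
    "&callback=" + encodeURIComponent(callbackName);
}

// Creates a script node and appends it to the head, so the visitor's
// browser (not your server) makes the request to the third-party API.
function requestViaScriptNode(doc, url) {
  const script = doc.createElement("script");
  script.src = url;
  doc.getElementsByTagName("head")[0].appendChild(script);
  return script;
}

// Browser usage (hypothetical endpoint and callback):
// window.showUpdates = function (data) { /* render the data */ };
// requestViaScriptNode(
//   document,
//   buildScriptUrl("https://api.example.com/updates.json", "user_name", "showUpdates")
// );
```

The document object is passed in as a parameter only so the functions can be exercised outside a browser; in a page you would simply hand over `document`.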

When using dynamic script nodes, the api.php file still sanitizes the user’s input, but instead of contacting the third-party API and writing out the data directly, it writes out an HTML scaffold and the necessary JavaScript files.

This, however, is not progressive enhancement, as it does not test whether JavaScript is available – instead it simply expects it to work. We could work around that by adding a hidden form field that gets populated with JavaScript, or simply by giving the submit button a name attribute when JavaScript is available.
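The hidden field variant could be sketched like this. The field name `js` and the function name are arbitrary choices for illustration; the server would then treat the presence of that field as a sign that JavaScript ran.

```javascript
// Adds a hidden field to the form. This code only runs when JavaScript
// is available, so the field's presence tells the server that it is.
// The name "js" and value "1" are arbitrary choices for this sketch.
function markJavaScriptAvailable(doc, form) {
  const field = doc.createElement("input");
  field.type = "hidden";
  field.name = "js";
  field.value = "1";
  form.appendChild(field);
  return field;
}

// Browser usage (assuming the page contains exactly one form):
// markJavaScriptAvailable(document, document.getElementsByTagName("form")[0]);
```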

In any case, the solution will never be proper progressive enhancement as you will have to maintain two versions: the one that builds the resulting interface in JavaScript, and another one that does it server-side. The server side solution will most likely keel over sooner or later and you cannot offer a simple URL interface like app.php?user=user_name as this will always lead to the server side solution instead of the JavaScript one.

Submission method switching

The way around that is to change the method of the form when JavaScript is available. Initially you set the form to POST and you change it to GET if JavaScript is turned on. You can then check in the API for POST or GET submission and react accordingly:

- If there is a GET parameter use the JavaScript solution

- If POST was used then the form was submitted without JavaScript and you offer the server-side solution.
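Both halves of the method switch can be sketched as below. The server side of this article is PHP; the decision is written in JavaScript here only to keep the examples in one language, and the function names and return values are hypothetical.

```javascript
// Client side: runs only when JavaScript is available. The markup ships
// with method="post", so a form that still arrives as POST was submitted
// without JavaScript.
function switchFormToGet(form) {
  form.method = "get";
  return form;
}

// Server-side decision, shown in JavaScript for illustration only:
// GET means the JavaScript solution, anything else the server-side one.
function chooseSolution(requestMethod) {
  return requestMethod.toUpperCase() === "GET" ? "javascript" : "server-side";
}

// Browser usage (assuming the page contains exactly one form):
// switchFormToGet(document.getElementsByTagName("form")[0]);
```

A side effect of this design is that a plain URL with a query string is a GET request, so it always ends up in the JavaScript solution.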

This means that people without JavaScript cannot use the REST API of your application, but they can still enter the data in the form and submit it. You will hit the rate limit in this case sooner or later, but seeing that most users will have JavaScript available, it is quite a safe bet that it’ll be a rare occasion.

You can see the result in the demo and download the demo files as a zip. Try the demo (any user name works, this is a hard-coded API, not live Twitter data) with and without JavaScript to see the difference.

Summary

All in all, strict rate limiting is a real pain for web application developers (or hackers, for that matter). The reasons are of course obvious, and this workaround does the job for now. It is, however, not quite right and does make things harder for users without JavaScript. The other issue, of course, is security: using JSON in generated script nodes without validation can become a problem.
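As a partial mitigation, the callback can at least check the shape of the data and escape it before writing it into the page. The sketch below assumes, purely for illustration, that the API returns an array of objects with a string `text` property. Note that it cannot stop a compromised API from running arbitrary code, since a script node executes whatever the remote server sends.

```javascript
// Accepts only an array of non-null objects that each have a string "text"
// property. The expected shape is an assumption for this sketch.
function isValidUpdateList(data) {
  return Array.isArray(data) && data.every(function (item) {
    return item !== null && typeof item === "object" &&
      typeof item.text === "string";
  });
}

// Escapes the characters that matter when inserting text into HTML.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Usage inside a callback (hypothetical):
// function showUpdates(data) {
//   if (!isValidUpdateList(data)) { return; }
//   const items = data.map(function (u) { return "<li>" + escapeHtml(u.text) + "</li>"; });
// }
```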

In the end, it boils down to what your API should be used for and to maintaining good communication with your API users. If your product is by definition meant for short-term, high-traffic viral solutions, then the ball is in your court to keep it scalable.