Witnessing the death of the web as a news medium

Monday, June 3rd, 2024

As some of you may know, I started out as a radio journalist. And when I discovered the web in around 1996, I knew that, to me, radio and TV were not the dominant news media any longer. Nowhere but on the web was it possible to research and cross-reference from dozens of resources with various origins. You could directly access the press agencies for news without having to read the politically tainted or sensationalist derivatives in various outlets.

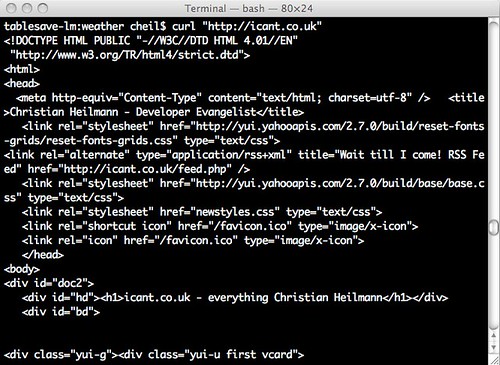

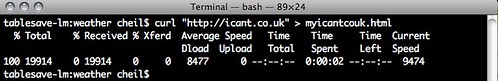

The amazing thing was the humble link. And as the cool kids said back then: “Cool URIs don’t change.” The powers of the web were:

- being able to link to other resources,

- remixing, and

- bookmarking for later use.

In other words, the web was about retention and accumulation of content. An ever growing library that by its very nature was self-indexing and cross-referencing. And this is what is being actively killed these days. But let’s go back a bit before I start focusing on that problem. Let’s take a peek at the slow decline of the web as a news medium.

Coffin Nail 1: Publishing for “free” comes at a price

The great thing about the web was that everyone could become a publisher and let their voice be heard. Finding places to write and create web pages was easy. But many of them were also short-lived, and we learned the hard way, for example when Geocities shut down, that “free” didn’t mean “yours forever online”.

Coffin Nail 2: Moving to tagging and commenting

When “web 2.0” became a thing, the publishing model got turned on its head. Instead of writing in your own publication, the idea was to comment and do smaller posts on a topic, linking to resources, or adding a funny image without alternative text. Cumulatively adding to threads, so to speak. A bit of a reminder of Bulletin Boards or Forums, but with less focus.

At that time I worked on various social media ideas at Yahoo, back then one of the main sources for people’s daily news, replacing daily papers. The model of Yahoo and others back then was simple: buy news content, spruce it up a bit and show ads around it.

Even then some dark patterns evolved, like splitting up longer content into carousels and pagination not for the sake of the user, but to record yet another click. Clicks and interaction mean ad displays; reading was kind of a necessary evil from a monetisation point of view. This is also when the first ideas of creating sticky, viral and – let’s call it by its real name – addictive and lock-in content came up. Something we have perfected by now, but still wanted to avoid back then.

Pulling Nails: approaching web 2.0 ethically

Back then “web 2.0” or user generated content was something we didn’t quite trust and the biggest no-no was to create a product for a community for the sake of having one. This anti-pattern was called Potemkin villages, after the fake villages that, as the story goes, were built for the empress to see when driving past, so she’d see growth where there wasn’t any.

So, instead of growing a community, you build an empty product. Without seeding it with some content first, this was a non-starter. People are happy to comment and add to something that already exists. Only a few are real content creators, and those were more likely to have their own blog.

Our ideas for creating social media products were simple:

- We wanted to encourage human-created answers rather than machines spouting data.

- We wanted to encourage people to write high quality content and reward them for it.

- We wanted to allow for human questions and dabbled with natural language processing.

And we found two important facts. People are much more likely to create content when you either:

- start with an existing community and give them a space online or

- when you put something at the centre of the social platform that people care about on an emotional level.

Facebook expanded on already existing university groups. LinkedIn and its European equivalent Xing were about finding a job and telling people where you work, so it was convenience rather than an emotional bond.

The “new” factor was also a big one. Delicious, for example, was thriving, with people bookmarking, describing and tagging resources and sharing them with friends. Yahoo Bookmarks did a similar thing, but without a focus on the social aspect. It also sported the already dusty Yahoo brand, which didn’t attract the cool, new content creators.

Flickr was about photos, something people care about deeply. Upcoming was about events, Ravelry was about knitting, Dopplr was about sharing your travel plans with friends. Things were interesting and the goodwill and effort the community put into tagging, cleaning up and categorising content for others was fun to see. All of it validated our assumptions about the emotional core of a good social network.

One big thing that was also part of this was that every product had a data API that allowed you to create mashups with the information and empowered techies to find new use cases for it. I even wrote a book about this with a colleague that took off like a lead balloon – but that’s another story.

We lost spectacularly with that approach, as it was about the people, not about quick success and the money it made.

Then came the times of microblogging, with Tumblr leading the charge, but also MySpace, Bandcamp and many more. Still, there was a semblance of something emotional at their core and people used these systems as their virtual homes and identity. But there was already a “fire and forget” mentality that came with it. People didn’t expect these things to have an edit history, and they kept getting changed. Maybe people were burned by Geocities’ demise, but one thing these places on the web were not: lasting.

Coffin Nail 3: Time-sensitive content

Another thing that soon became apparent is that a lot of content became time sensitive – or, well – created with a defined expiry date in mind.

This has always been the case in the creative arts. When Web Design started to be a thing, getting to design a web site for a movie or a festival was a carte blanche to go wild and push the limits of the platform. You knew that the product had a fixed life span, and nobody would give a monkey’s in a month’s time.

It got trickier when news outlets did the same. I remember when the Guardian and the BBC offered full access to their archives. I even remember when other newspapers and news aggregator content was available to remix. But soon any news content past 30 days was deleted from the web and you had to rely on Google Cache or The Internet Archive’s WayBackMachine to quote content made a month ago.

Publishers started realising that throwing out more short-lived, dramatic content is how you get the clicks. And this is what it was all about.

Coffin Nail 4: Search Engine Optimisation

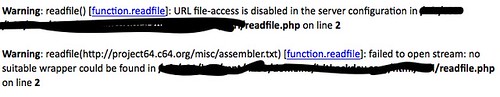

The next deep cut to the web as a publication medium was search engine optimisation. Sites stopped linking to other sites, and instead started to link to their own, search engine optimised archive and overview pages to keep the users in the system.

I’ve always hated that. “Politician X did this which is related to Y” with Y being a keyword linked to an older publication on the same platform. This is not a citation or verification – it is a waste of my time.

As a content creator with old, well indexed content you keep getting offers to add links to boost, frankly, content-less pages that are ads or products. I get about 20 of them a week, some praising my “great content” and quoting an archive page like https://christianheilmann.com/2005/04/. It’s insulting and a waste of time.

Real black hat SEO went further with generating link farms, search engine optimised blogs and fake sites all linking to one another. It was the first indication of the journey towards content being created for bots and crawlers rather than for humans. All of this was done because the only monetisation model of the web that really worked and brought big money was ads. And this led to the next problem.

Coffin Nail 5: The Ad blocker arms race

Meanwhile, content sites do cost money, so you need to get it from somewhere. Subscription models are tricky and don’t really translate from printed newspapers to online. So, publishers did what they knew – they displayed ads. First subtly, then almost unbearably so.

Having a blaring, auto-playing video advertising an SUV is not as sleazy as a popup on more, uhm, exotic content sites about male enhancement products, but it’s technically the same thing. Other ads and platforms like Facebook were even more intrusive and followed you around the web. When people add an official “Share to Facebook” button to their sites, it means you are being spied on.

This led privacy and security advocates to build add-ons to browsers that would remove intrusive ads and third party includes.

The practical upshot of that for everyone was that ads were removed from the pages and all the annoyances were gone. And you could even claim that you used ad blockers to protect your privacy, and not because you want to have everything for free. The users won.

But the market has a penchant for fighting back.

Ads became even more intrusive, embedded in media like images and videos. Many sites tried an adorable approach to detect ad blockers and tell people to please not use them. Others made their products dependent on JavaScript delivered from the same CDNs as ads, thus breaking the experience, adding overhead and making things less resilient for every user.

Things cost money, and instead of trying to find better ways to get people to pay for online content they think is worth it, the market did something much, much worse.

Coffin Nail 6: The death of web search

Search is big money; browsers are expensive loss leaders. Chrome exists to advertise Google content and send you to Google search. Microsoft Edge exists to give you MSN and Bing content.

When these services stopped making money because people used ad blockers, every commercial search engine started showing you lots of ads disguised as search results or similar products to the thing you might have looked for.

I wrote about that some time ago, in the essay “The web starts on page four” (yes, I am linking to my blog. No, it is not ironic, and I will throw 10,000 spoons at anyone who claims so).

This got even worse. I like to research things I want to buy. So, if I enter a product name with a size and a code like “Fred Perry Polo M3600 Black L”, I do not want a Lacoste Polo, no matter how much money they pay your search engine.

Web search has become a shopping mall rather than returning links from the web. There is a URL hack you can use in Google to only get Web results but I wouldn’t be surprised if that went away soon.

Fact is that indexing has become less important. 38% of webpages that existed in 2013 are no longer accessible now. Longevity isn’t a goal anymore, it seems.

Coffin Nail 7: Social media optimisation

Then came the big era of social media. A misnomer, as there isn’t much social about it. Twitter, Facebook, and others have had a social aspect, for sure, but once they became mainstream media contenders, they soon became weaponised. First, to sell lots of stuff (remember that Facebook is also Instagram, WhatsApp…), and second to change people’s opinions. The Cambridge Analytica kerfuffle showed that by having an addiction machine and keeping people in their bubble you can do much more than the Nazis ever could by giving people affordable radio sets.

The immediacy and ephemeral nature of social media these days is the equivalent of virtual cocaine. It’s fast, it promises glamour and people get dependent on it without realising it as it all makes so much sense to them. Many studies show that the more outrageous, borderline illegal content gets, the more people interact with it. Even when they are disgusted about it.

For an interesting example, take a look at the viewing numbers of pimple squeezing videos on YouTube, which do exceed fetishists’ consumption by far. And these are the least distasteful things that make up short lived successes. We had a period like that on the earlier Internet, too, with sites like Stileproject and Ogrish leading the charge.

Once search engines returned Twitter posts in favour of web pages or news content, we went down a slippery slope. And lately this has moved into utterly manipulative territory.

Almost every social platform now ranks posts with links lower than posts that are just statements. Posts that put that statement in an image (often without any alt text) rank even higher. It’s a middle finger to the web we thought about creating. The global read and write library.

Personal opinion and shock factor trump statements with links to verify them. Welcome to a pub full of drunk folk spouting half-arsed knowledge and getting their mates to gang up on you when you try to point out obvious flaws in the statement.

Moving to disposable content

The problem with immediacy and going for more atomic content creation is that there is no track record. My blog has been indexed and spread far and wide since I started it in 2005. Whatever I put on Twitter over the years is either hard to find or lost. And this is not something the market laments. Instead, it is seen as a thing a new generation of users craves and wants. Is that demand manufactured? Are we controlling a new generation of people by shoving them into a perfect addiction machine like TikTok for the sake of keeping them occupied? Or is this really where media goes?

Machine generated, optimised, boring and immediately forgotten

ChatGPT was a roaring success and people are scared of missing the boat so everything gets “AI” shoved into it right now. Google messed up badly with indexing content from Reddit, a platform with a history of fun “Wrong answers only” posts. The result was summaries that sound excellent and invite us to keep chatting with a bot full of nonsense. They now say they fixed it by favouring less funny and viral content. Facebook started doing the same, and so does Bing.

When did you ever get a single answer from a human that made you happy without further questions? AI powered summaries are like hitting “I’m Feeling Lucky” back in the day on Google. Even back then we overestimated the quality of the algorithm. And soon SEO players took that on to get their results ranked first, no matter their validity.

We face a web right now that is machine-generated content for bots to consume. Those bots throw us humans tidbits that sound solid, but are based on decades of random content added to the web to answer a quick “how”, but not the “why” behind it. It pains me to see the opportunity that was the web squandered like this.

There are counter movements and nobody can stop you from publishing long form, great content. And maybe that’s reward in itself. I feel better for having this written down. And I don’t care if it goes viral, gets quoted by people cleverer than me, or gets media fame.

But I had the power to throw it out. A power the web gives everyone. For now. So let’s think about how we can keep that an option.