Yesterday, I was asked by the Berlin chapter of Women Techmakers to give a talk at the graduation ceremony of their JavaScript Crash Course. I wanted to give a talk about the next steps you can take in 2017 now that you have learned the basics of JavaScript, instead of repeating old ideas. This is what I came up with.

First of all, congratulations – you chose wisely. JavaScript has evolved from being a “toy language” that “real programmers” laughed at into the language that powers the web and beyond.

For better or worse. JavaScript isn’t a perfect language – if something like that even exists. But it has a few things that speak for it.

JavaScript is everywhere

First of all, it is by now ubiquitous and you can create a lot of amazing things with it.

- You can use it in a browser environment to make web “things” that are highly interactive. These could be web sites that respond better and are more engaging when JavaScript works. They could also be apps that users install without even needing to know that you used HTML, CSS and JavaScript to build them. They could even be complex games and VR experiences.

- You can also go server-side with Node. Then you can use JavaScript to build APIs, services and even full-fledged servers. You can create build processes and automate a lot of boring tasks that in the past needed a server to run on.

- You can use abstraction frameworks to publish on the web and create binary formats for iOS, Android and others. By starting with JavaScript, you can convert into many other things – something that isn’t sensibly possible the other way around.

- Or you can go completely wild and build robots that get their instructions in JavaScript. You can automate operating systems. You can write extensions for browsers and you can script other applications.

- You can publish to and take advantage of NPM, a vast resource of pre-built solutions you can mix and match to build your own – more complex – solutions. This is tempting, but there is also a danger of using too much and using things you don’t understand. So, whilst we’re in a package world with JavaScript, it makes sense to remember what JavaScript is and start there.

Mastering JavaScript isn’t easy, but getting started is simpler than with other languages. You are not dependent on a certain editor and you don’t need to compile it to create something that can run. Most important of all – you don’t need to spend any money to get started. Browsers are free. A lot of editors are free and many even open source. Above all, documentation is online and available to you.

You know the best thing though? I envy you!

I’ve been working with JavaScript more or less since it has been around and I’ve been carrying a lot of ballast with me.

There is a running joke where you can see the “Definitive Guide to JavaScript” book next to Douglas Crockford’s “JavaScript – the good parts”. The latter is much, much smaller. This doesn’t mean that JavaScript is bad. It means that the versatility of JavaScript can be its own undoing. JavaScript runs everywhere. Over the years terrible environments left a track record of awful APIs and methods. The standardisation process of JavaScript has been a roller-coaster ride. The Definitive Guide explains that. It is a compendium of all that happened – good and “dear me, why would you even consider doing this in JavaScript?”. The Good Parts book sticks to the syntax and how to write pure JavaScript. Think of The Guide as the whole back catalogue of a band with all their sins of the past. Whereas “The Good Parts” is their hit single.

Starting with a much cleaner slate

And here is where you come in. I don’t even want you to think about the good parts of the language itself. That can come later – if deep-diving on a language’s syntax and constructs tickles your fancy. Right now, I envy you because you have a chance to hit the ground running. And I want you, as a newcomer, to not repeat the mistakes we made to get where we are.

So next up are a few things I want to introduce to you that you can use to take the next step as a JavaScript developer. These things can help you become more effective in what you do and also help you to take part and help the community. You’re very welcome to disregard this advice. You’re also welcome to challenge it – after all, this is what new voices are about. With the speed at which JavaScript evolves, some things I tell you now will become obsolete, for sure. But I for one am excited about these things and I am sure that, had I had them when my career started, I’d be much further than I am now.

First of all, where can you go to learn about JavaScript?

Unsurprisingly, this is a tricky one. JavaScript is a hot topic and a lot of people get the job to write some without wanting to understand it. This means there are terrible, spammy resources telling you the “simple” way to do something in JavaScript. Do yourself a favour and don’t fall for that siren song. Also be vigilant about “here is the quick solution” answers on the web. Often these are biased solutions that keep getting repeated to reach a quick result. If something sounds too good to be true, often that is exactly what it is.

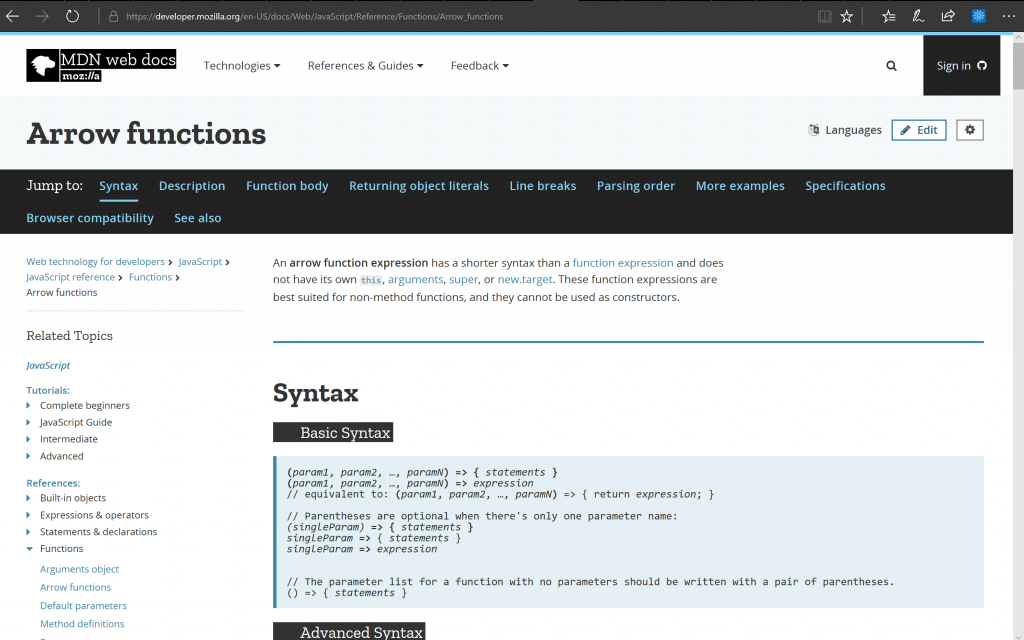

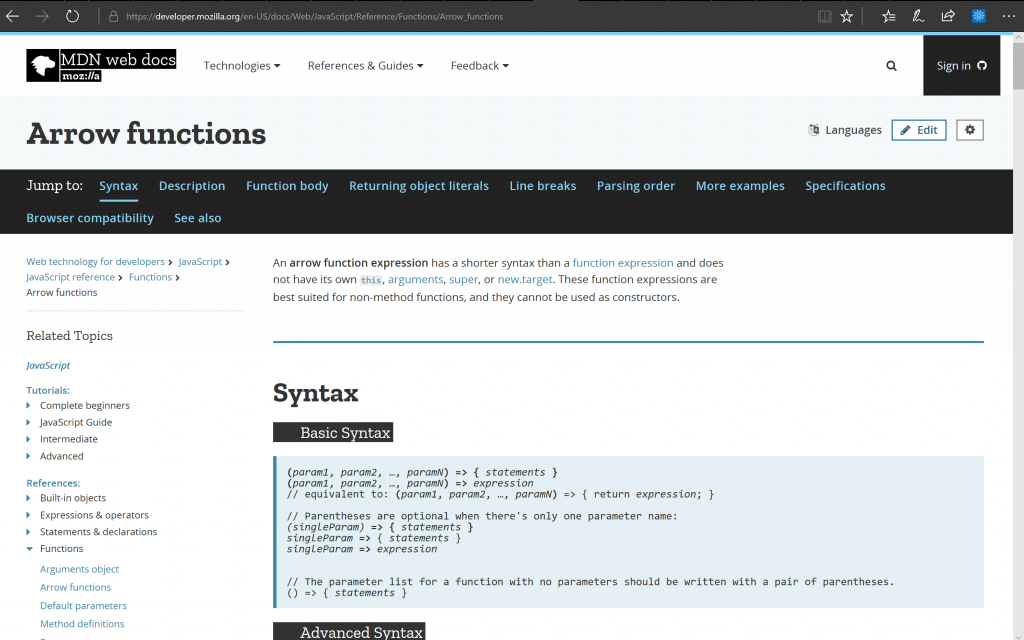

This is where the MDN Web Docs is my weapon of choice. It is an unbiased, open, very well maintained resource. It is not a Mozilla-only thing; many other players add content and help keep it up-to-date. It is even writable – you can make edits when you find something is wrong.

The great thing about the Web Docs is that it isn’t “just” documentation. It also has code examples and detailed browser support tables for all the things it talks about. Many resources that tried to document the open web came and went. MDN prevailed.

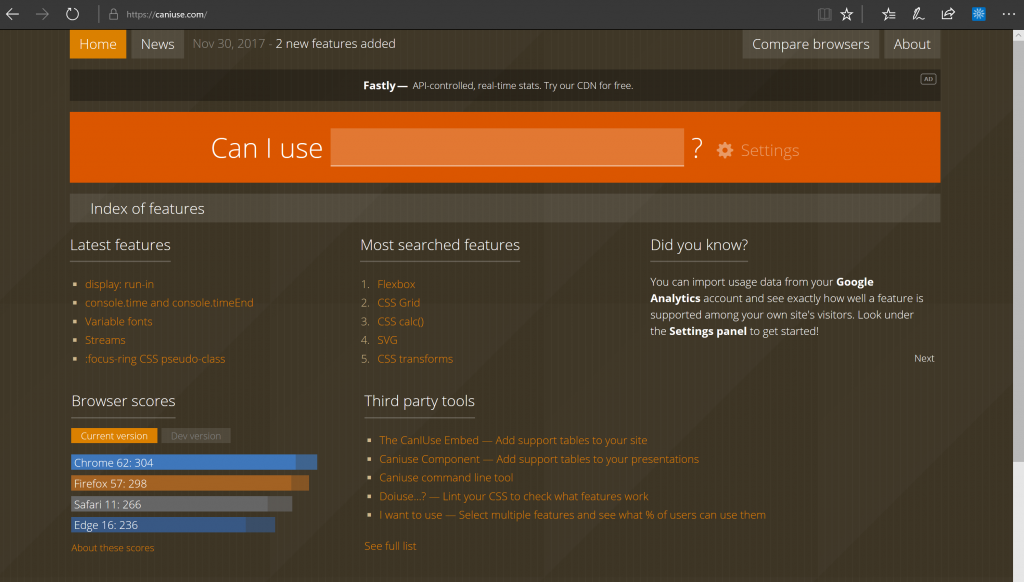

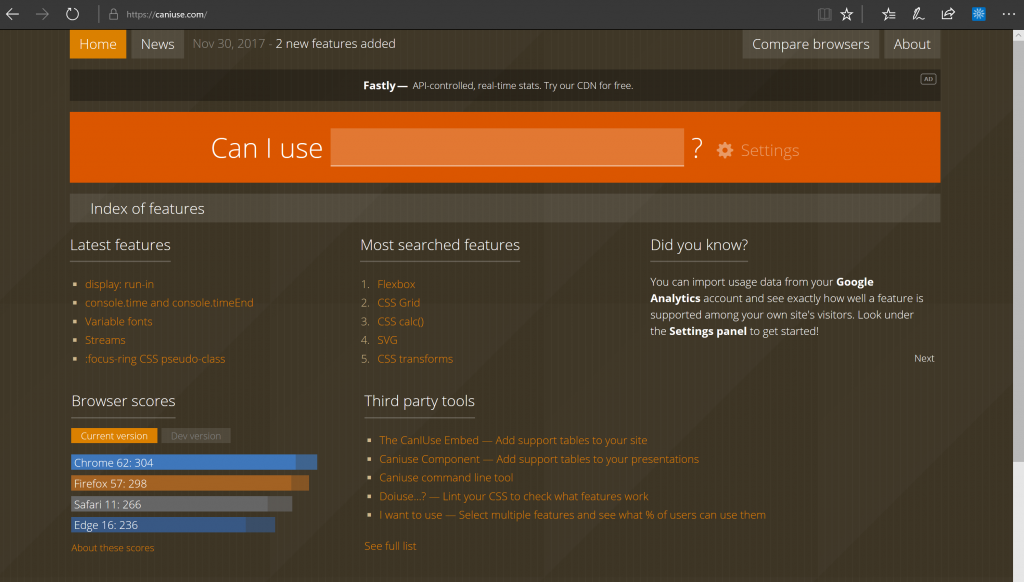

Talking about browser support, Can I use is a well-maintained resource. It doesn’t only show detailed browser support. It also links to the standard documents telling you what should happen. And it shows the quirks and problems in various versions with possible workarounds.

Browsers are much less of a problem

Talking about browsers, it is a wonderful time in that regard. We’ve had a tricky ride there. In the past, browsers were a black hole and we had no idea what magic made them work. Until browsers committed to following standards, we had to know about their ideas of how things should work. In essence, our career was dependent on knowing how browsers mess up. That’s easy to do, but in the long run not fulfilling. Nowadays browsers take a much more leading role in defining and inspiring standards. Browsers themselves strive to be evergreen. Non-standardised functionality is behind flags or shipped in “developer editions” or nightly builds.

The best thing is that browser makers are available for feedback and invite you to file bugs. This has been a massive change and something we as developers should cherish. There is a lot less of “this is how it is, not much we can do” and a lot more “well, that doesn’t work, can we get this fixed”.

Browser makers also understand that developers matter. Furthermore, they understand that a web developer is as much an engineer as somebody writing Java or C++. Engineering needs more than “throw code at us and we make it work”. We need insights into how our code performs and what effects it has. That’s why all browser makers spend a lot of time building developer tools. These give us the insights we need to write performant and secure JavaScript.

Learning browser developer tools

It is a good idea as a JavaScript developer to get accustomed to browser devtools as much as you can. Every browser provides them and they differ in some regards, but they all give you a lot of insight. You can learn what’s available to you by inspecting objects the browser gives you in the console. You learn what happens when your page gets rendered and where the bottlenecks are. You can inspect, edit and test what the visual output of your code is and see the CSS browsers generate from it.
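To make the idea of inspecting objects in the console a bit more concrete, here is a minimal sketch you could paste into a devtools console. The helper name `listMethods` is made up for this example; it only walks the prototype chain and collects everything that is a function. In a browser you could try it with objects such as `navigator`; the sample below sticks to an array so it also runs in Node.

```javascript
// Hypothetical helper (not part of any browser API): collect the names of
// all methods an object offers, including inherited ones.
function listMethods(obj) {
  const names = new Set();
  let current = obj;
  // Walk up the prototype chain, stopping before the generic Object.prototype.
  while (current && current !== Object.prototype) {
    for (const name of Object.getOwnPropertyNames(current)) {
      // Look at the property descriptor so that getters are not triggered.
      const descriptor = Object.getOwnPropertyDescriptor(current, name);
      if (name !== 'constructor' && descriptor && typeof descriptor.value === 'function') {
        names.add(name);
      }
    }
    current = Object.getPrototypeOf(current);
  }
  return [...names].sort();
}

// Prints the method names available on every array, such as "map" and "push".
console.log(listMethods([]));
```

The built-in `console.dir()` gives you a similar, clickable view of an object, so treat this mostly as a way to see how much the environment hands you for free.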

Moving from console.log() to breakpoint debugging

One thing that helped me a lot is moving away from a console.log() mentality to using breakpoints. Instead of having to ask for each of the things you want to know about, you get so much more. Breakpoint debugging has the benefit that the JavaScript engine stops. You can then get a snapshot of what’s happening in the browser. You don’t only get the value of, for example, a variable, but you can also see the effects this change has. And you have a direct way to step through debugging your code without having to change it. One breakpoint is often worth dozens of logging requests.
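As a small illustration, here is a sketch with made-up names (`totalPrice`, `price`, `quantity`). The usual way to set a breakpoint is to click the line number in the devtools source view, which needs no code change at all. Since that is hard to show in text, this sketch uses the `debugger` statement instead: it pauses execution at that line when developer tools are open and does nothing otherwise.

```javascript
// Hypothetical example function; the data shape is an assumption.
function totalPrice(items) {
  let total = 0;
  for (const item of items) {
    // With devtools open, execution pauses here on every loop iteration.
    // You can then inspect `item`, `total` and the call stack, and step on.
    debugger;
    total += item.price * item.quantity;
  }
  return total;
}

console.log(totalPrice([
  { price: 2, quantity: 3 },
  { price: 5, quantity: 1 }
])); // logs 11
```

Compare that with the logging approach: you would need one console.log() call for `item`, one for `total`, and another round of edits as soon as you wanted to know something else.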

It is great that we can now debug our JavaScript, but wouldn’t it be better if we didn’t make mistakes in the first place? We’re human, we get tired, we get bored, we get sloppy. That’s why it is good when we get reminders that we’re doing something wrong whilst we’re doing it. In word processing this is the spelling and grammar check that adds squiggly red lines where we went wrong.

Linting – prevent bugs by not writing wrong code

In development, this is linting. A linter is software that analyses your code while you write it. It inspects the code in the background, without you having to run it, and tells you when something is wrong. In the past you needed to install and configure linters yourself. These days, many come as extensions to editors with preconfigured rules. Rules that are the result of years of experts arguing and finding a consensus on what makes sense. Google’s developer tools in Chrome give you similar insights. Reading through the results of linters is a good learning exercise. They not only tell you that something is wrong, but also why, what the effects are and how to fix it.
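Here is a rough sketch of the kind of thing a linter catches. The function names are invented for this example, and the rule names in the comments are ESLint’s, which is only one of several linters and an assumption on my part. Both versions are valid JavaScript and run without errors, which is exactly why a reminder in the editor is useful.

```javascript
// Before: runs fine, but a linter would typically complain twice.
function isZeroLoose(value) {
  var leftover = 'never read'; // unused variable (ESLint rule: no-unused-vars)
  return value == 0;           // loose equality (ESLint rule: eqeqeq)
}

// After: the version the linter nudges you towards.
function isZeroStrict(value) {
  return value === 0;
}

// Loose equality converts types first, so an empty string counts as zero.
console.log(isZeroLoose(''));  // true
console.log(isZeroStrict('')); // false
console.log(isZeroStrict(0));  // true
```

The surprising `true` in the first call is the sort of bug that can sit in a code base for years. A linter points at it while you are still typing.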

Finding an editor that makes you more effective

This is also where a good editor is a life-saver. As said before, you can use whatever you want to write your JavaScript. For me, I am amazed how much work I get done using Visual Studio Code. This editor is open source and comes with hundreds of extensions to help you with your tasks. It has linting built-in and allows for setting breakpoints in the editor itself. It contains a command line interface to do more hard-core tasks and has Git version control built in. There is something great about not having to jump from editor to browser to terminal. Of course, sooner or later you’ll have to master all three, but why not start with a shortcut?

And guess what this editor is written in? Yes, JavaScript.

When using JavaScript you will find holy wars and ideas of what editor in what configuration is the best. As there are dozens of options in hundreds of variations, you’re welcome to go down that rabbit hole. I move every few years from one environment to another and right now, Visual Studio Code makes me happy. And many others agree. It gives you a jumpstart by providing integration with build processes out of the box. It is cross-platform and lightweight compared to other IDEs with similar feature sets. And you can hack on it and extend it using JavaScript.

Publication and build processes

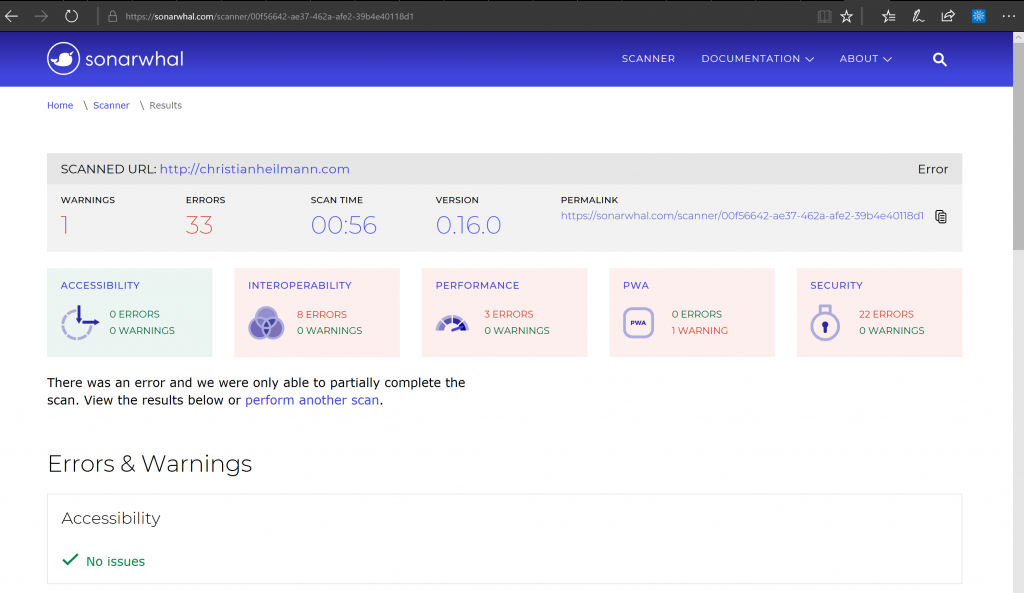

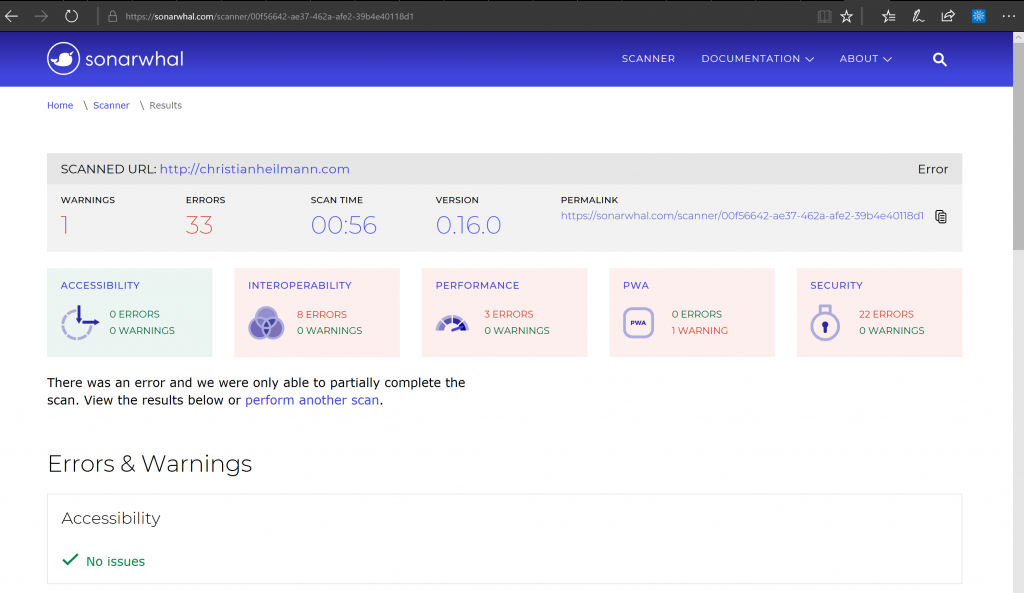

That said, editors are not the only place where linting can help. It can also be a part of a publication process. Sonar, for example, is a tool that takes a URL and then tells you all the things that need improvement. This involves performance, compatibility, security and many more. A service like Sonar can act as a gate-keeper in your release process. A bug found by you is better than one reported by your users. Or, even worse, one that prevents people from using your work without you ever hearing about their problems.

Release processes are a huge part of what we follow in JavaScript these days. So it is a good idea to keep in the back of your head that you will need to get accustomed to them. A common piece of advice is to start your own project and follow best practices, as you can’t break other people’s projects that way. But I am not sure how helpful this is and found it rather dry and frustrating.

Should creating your first project include configuring your own server?

There is no doubt that you learn a lot by starting your own projects and running them in a professional manner. But you don’t need to start that way. I’d argue it makes more sense to start smaller. JSBin, CodePen, Glitch and other hosting communities are there for you to play and learn. They allow you to use preprocessors, frameworks and package managers to build something. You don’t get stuck setting them up and configuring your own computer over and over again. You can see if they work for you without the overhead of having to learn and un-learn something. In a job, as part of a team, it is unlikely that as a junior developer you’d be setting up the environments. So keep that for when it is necessary.

Learning materials are free and in abundance. But what’s good and what’s spam?

Talking about learning, we live in amazing times. There are almost weekly conferences about JavaScript. There are free-to-attend meetups. There are Slack communities, mailing lists and free online resources. Many books on the subject are free to read online. And almost all the content you pay a lot of money to see live ends up on YouTube to watch within hours. Frankly, we have so much content that triaging it becomes a necessity. So instead of trying to follow it all, find people you trust who do a good job collecting and commenting. Then take your time to digest what is out there at your own pace. I watch talks in the gym on my phone – the average JavaScript talk burns 500 calories on a cross-trainer.

Contributing to projects and dealing with other people

Instead of starting by owning a product, it makes much more sense to take part in existing ones. This is what JavaScript is about these days. We’re not dealing with a language, but we are dealing with a community of developers. Many open source projects have people to help you get involved. You can get started by helping to improve documentation. You can fix simple problems the maintainers are too busy to look at as they have bigger fish to fry. And you can learn a lot by lurking and seeing what people do and how they work with each other. You also learn what to avoid and what kind of developer not to become.

As it can get messy, I’m not beating around the bush here. The success of JavaScript and the general hype around software engineering has an ugly side to it. People get into unhealthy competition and see overworking themselves as a badge of honour. It doesn’t help that many community tools value “fast and often” as a way of contribution over “good and well explained”. You will encounter terrible communication and open hostility to change and discussion.

You’re new here. Be that new person and a better one than those who annoy you

And here is my plea to you. Don’t become that person. Don’t feed the ego of those valuing telling others that they are wrong over working together. Stay calm, be kind and don’t let blanket statements and open dismissal of ideas stop you. There is a lot to choose from. No need to fill your life with more drama, when all you want is to make your mark as a creator.

We have a beautiful technology stack here and if I learned anything, it is that change is the only constant. And today, here and now, I hope that you will be part of a change that we need.

Your happiness is more important than getting into fights over syntax, process or what framework to use. Happy developers build better products. The more diverse we become, the more we can reflect those who use our products.

And with that, I am looking forward to seeing what you do next. Thank you for going on this journey. It did me good, and I hope it will also work for you.