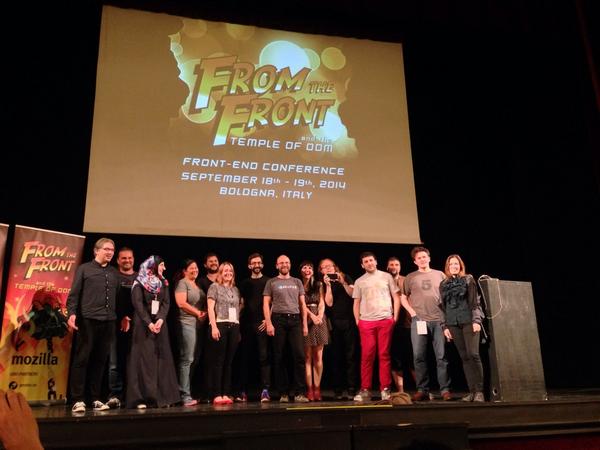

Notes on my closing keynote of From the Front 2014

Monday, September 22nd, 2014 at 3:32 pm

These are some notes about my closing keynote at From the Front in Bologna, Italy last week. The overall theme of the event was “Temple of the DOM”, so I kept it Indiana Jones themed (one could say shoehorned, but I wasn’t alone in this).

The slides are available on Slideshare.

In Indiana Jones and the Temple of Doom the Sankara Stones are very powerful stones that can bring prosperity or destroy people, depending on how they are used. When you bring the stones together they light up and in general all is very mystic and amazing. It gives the movie an adventure angle that cannot be explained and allows us to suspend our disbelief and see Indy as being capable of more than a normal human being.

A tangent: Blowing people’s minds is pretty easy. All you need to do is take a known concept and then make an assumption from it. For example, when you see Luigi from Super Mario Brothers and immediately recognise him, there is quite a large chance you have an older sibling. You were always the one who had to wait till your sibling failed in the game so it was your turn to play with “green Mario”. Also, if Luigi and Mario are the Mario brothers then Mario’s name is Mario Mario. Ponder this.

The holy trinity of web development

On the web we also have magical stones that we can put together and create good or evil. These are the standardised technologies of the web: HTML, CSS and JavaScript. These are available in every browser, understood by them without any extra compilation step, very well documented and easy to learn (but harder to master).

Back in 1999, Jeffrey Zeldman taught us all not to write tag-soup any longer and use the technologies of the web to build intelligent solutions that use them to their strengths. These are commonly referred to as the separation of concerns:

- Structure (HTML and added extra-value semantics like Microformats)

- Presentation (CSS, Images)

- Behaviour (JavaScript)

Back then this was a very necessary stake in the ground, explaining that web development is not a random WYSIWYG result but something with a lot of planning and organisation behind it. The separation of concerns played all the different technologies to their strengths and also meant that one or two can fail and nothing will go wrong.

This also paved the way for the progressive enhancement idea that all you really need is a proper HTML document and the rest gets added when and if needed or – in the case of JavaScript – once it has been tested to be available and applicable.
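A rough sketch of what “tested to be available” can look like in practice. The `canEnhance` and `enhance` helpers and the `js` class name are made up for this example and are not part of any library; the point is only that the script checks for what it needs before touching the document, and leaves the HTML alone otherwise.

```javascript
// Hypothetical helper: report whether the features this enhancement
// relies on are present on the given root object.
function canEnhance(root) {
  return Boolean(
    root &&
    typeof root.querySelector === 'function' &&
    typeof root.addEventListener === 'function'
  );
}

// Hypothetical helper: run the enhancement only when it can work.
// Returns true if the enhancement ran, false if the page was left as is.
function enhance(root, applyEnhancement) {
  if (!canEnhance(root)) {
    return false; // the plain HTML document stays untouched
  }
  applyEnhancement(root);
  return true;
}

// In a browser: the HTML works on its own, the script only adds to it.
if (typeof document !== 'undefined') {
  enhance(document, function (doc) {
    doc.documentElement.className += ' js'; // assumed class name
  });
}
```

If the check fails, nothing happens and the visitor still gets the document, which is the whole idea.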

The problems started when people with different agendas skewed the concept of the separation of concerns:

- HTML and semantic markup enthusiasts advocated far too loudly for very clean markup, validation and adding things like Microformats. For engineers just trying to get something to show up in a browser this has always been confusing, as the tangible benefits of this are, well, not tangible. Browsers are very forgiving and will fix HTML for you, and when there is no interface in browsers that surfaces the data in Microformats, why do it? Of course, I disagree and have stated very often that semantic, clean markup is the good grammar of the web – you don’t need it, but it does make you much easier to understand and shows that you learned what you are doing. But that doesn’t really matter. The fact is that we continuously try to make people understand something we hold dear without giving them tangible benefits.

- JavaScript enthusiasts, on the other hand, create far too much with JavaScript. This is a matter of control. You know JavaScript, you are happy seeing parts of an app or a page as objects and you want to instantiate them, inherit from them and re-use them. You don’t want to write much code but feel that generating it is the most clever way of using technology. Many JS enthusiasts also keep citing that browser differences are a real issue and that in JS they have the chance to test and fix problems browsers have. The fallacy here, of course, is that by doing that they also made the current and future browser issues their own.

- CSS enthusiasts started to argue against JavaScript as a tool when CSS became more powerful. Are animations and transitions behaviour or presentation? Should they be done in CSS or in JS, where there is much more granular control? What about generated content? Where does this belong? We can create whole drawings from one DIV element, but should we?

All of this, together with lots and lots of libraries promising to solve all kinds of cross-browser issues, led to the massively obese web we see today. An average web site size of almost 2MB would have blown our minds in the past, but these days it seems the right thing to do if you want to be professional and use the tools professionals use. Starting a vanilla HTML file feels like a hack – using a build script to start a boilerplate seems to be the intelligent, full stack development thing to do.

Best practice reminders, repeated

This is nothing new, of course.

Back in 2004, I wrote a self-training course on Unobtrusive JavaScript, trying to make people understand the need for separation of behaviour and look and feel. In 2005 I questioned the validity of three layers of separation as I worked on CMS- and framework-driven web products where I did not have full control over the markup but had to deal with the result of .NET 1.0 renderers.

Web technologies have always been a struggle for people to grasp and understand. JavaScript is very powerful whilst being a very loosely architected language compared to C or Java. The ability to use inline styling and scripting always tempted people to write everything in one document rather than separating it out into several which allows for caching and re-use. That way we always created bloated, hard to maintain documents or over-used scripts and style sheets we don’t control and understand.

It seems the epic struggle about what technology to use for what is far from over and we still argue until we are blue in the face if an animation should be done in CSS or in JavaScript and whether static HTML or deferred loading and creation of markup using template engines is the fastest way to go.

So what can we do to stop this endless debate?

The web has moved on a lot since Zeldman laid down the law and I think it is time to move on with it. We have to understand that not everything is readily enhanceable. We also have standard definitions that just seem odd and could have very much been better with our input. But we, the people who know and love the web, were too busy fighting smaller fights and complaining about things we should have taken for granted a while ago:

- There will always be marketing materials or commercial training programs that get everything we stand for wrong. Mentioning them or trying to debunk them will just get more people to look at them. Yes, I do consider W3Schools part of this. We make these obsolete and unnecessary by creating better resources, not by telling people about their dangers.

- Browsers will always get things wrong and no, there will not be an amazing future where all browsers are ever-green and users upgrade all the time.

- Materials by standards bodies like this “Standards for Web Applications on Mobile: current state and roadmap” will always be verbose and seem academic in their wording. That’s what a standard is. There cannot be any wiggle room, which is why it sounds far more complex than we think it is.

- There will always be people who use a certain technology for things we consider inappropriate. A great example I saw lately was a Mandelbrot fractal renderer, written in Sass, that creates a span for each pixel and needs 5 minutes to compile.

A fault tolerant web? Think again

One of the great things of old about the web was that it was fault tolerant. Meaning that if something breaks, you can provide a fallback or the browser ignores it. There were no broken interfaces.

This changed when multimedia became a larger part of HTML5. Of course, you can use a fallback image for a CANVAS element (and you should, as these get shown as thumbnails on Facebook, for example), but it isn’t the same thing: you don’t add a CANVAS for the fun of it, but as an interactive part of the page.

The plain fallback case does not quite cut it any longer.

Take a simple example of an image in the page:

```html
<img src="meh.jpg" alt="cute kitten photo">
```

This is cool. If the image can not be loaded or rendered, the browser shows the alternative text provided in the alt attribute (no, it is not a tag). In most browsers these days, this is just a text display. You even have full control in JavaScript knowing if the image wasn’t loaded and you could provide a different fallback:

```javascript
var img = document.querySelector('img');
img.addEventListener('error', function(ev) {
  if (this.naturalWidth === 0 && this.naturalHeight === 0) {
    console.log('Image ' + this.src + ' not loaded');
  }
}, false);
```

With video, it is slightly different. Take the following example:

```html
<video controls>
  <source src="dynamicsearch.mp4" type="video/mp4">
  <a href="dynamicsearch.mp4">
    <img src="dynamicsearch.jpg" alt="Dynamic app search in Firefox OS">
  </a>
  <p>Click image to play a video demo of dynamic app search</p>
</video>
```

If the browser is not capable of supporting HTML5 video, we get a fallback image (again, great for indexing by Facebook and others). However, these browsers are not that likely to be in use any longer. The more interesting question is what happens when the browser can not play the video because the video codec is not supported? What end users get now is a grey box with the grace of a Java Applet that failed to load.

How to find out that the video playback failed? You’d expect an error handler on the video would do it, right? Well, not according to the specs which ask for an error handler on the last source element in the video element. That means that if you want to have the alternative content in the video element to show up when the video can not be played you need the following code:

```javascript
var v = document.querySelector('video'),
    sources = v.querySelectorAll('source'),
    lastsource = sources[sources.length - 1];
lastsource.addEventListener('error', function(ev) {
  var d = document.createElement('div');
  d.innerHTML = v.innerHTML;
  v.parentNode.replaceChild(d, v);
}, false);
```

Codec detection is incredibly flaky and hard, as codecs live at the OS or hardware level and are not fully in the control of the browser. That’s probably also the reason why the canPlayType() method of a video element (which is meant to tell you if a video format is supported) returns “maybe”, “probably” or an empty string. A coy method, that one.
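To make the coyness concrete, here is a small sketch that maps the three possible answers to plain words. `playbackConfidence` and its labels are hypothetical names for illustration; the only real API used is `canPlayType()`, and the MIME string is just an example.

```javascript
// Hypothetical helper: translate canPlayType()'s answer into a label.
// `video` is anything with a canPlayType(mimeType) method, normally a
// <video> element. Objects without that method count as unsupported.
function playbackConfidence(video, mimeType) {
  if (!video || typeof video.canPlayType !== 'function') {
    return 'unsupported';
  }
  var answer = video.canPlayType(mimeType);
  if (answer === 'probably') {
    return 'likely';
  }
  if (answer === 'maybe') {
    return 'unknown until tried';
  }
  return 'unsupported'; // the empty string means "no"
}

// In a browser, probe with a throwaway video element.
if (typeof document !== 'undefined') {
  var probe = document.createElement('video');
  console.log(playbackConfidence(probe, 'video/mp4; codecs="avc1.42E01E, mp4a.40.2"'));
}
```

Even “likely” is no guarantee, so the error handler on the last source element is still needed.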

It is the web, deal with it!

We could get very annoyed with this, or we can just deal with it. In my 18 years of web development I learned to take things like that in stride and I am actually happy about the quirky issues of the web. It makes it a constantly changing and interesting environment to be in.

I really think Mattias Petter Johansson of Spotify nailed it when he answered someone on Quora who asked why JavaScript is the only language in the browser:

Hating JavaScript is like hating the Internet.

The Internet is a cobweb of different technologies cobbled together with duct tape, string and chewing gum. It’s not elegantly designed in any way, because it’s more of a growing organism than it is a machine constructed with intent.

This is also why we should stop trying to make people love the web no matter what and force our ideas down their throats.

Longevity? Meh!

One of the main things we keep harping on about is the lovely longevity of the web. Whether it is Microsoft’s first web page still working in browsers now after 20 years or the web being the only platform with backwards compatibility and forward enhancement – we love to point out that we are in for the long game.

Sadly, this argument means nothing to developers who currently work in the mobile app space where being first out of the door is the most important part and people know that two months down the line nobody is going to be excited about your game any more. This is not sustainable, and reminds me of other fast-moving technologies that came and went. So let’s not waste our time trying to convince people who already subscribed to an idea of creating consumable software with a very short shelf-life.

I put it this way:

If you enable people world-wide to get a good experience and solve a problem they have, I like it. The technology you use is not the important part. How much you lock them in is. Don’t lock people in.

Let’s analyse our own behaviour

A lot of the bloat and repetitiveness of the web seems to me to stem from three mistakes we make:

- we optimise prematurely

- we tend to strive for generic solutions for very specific problems

- we build stop-gap solutions to use upcoming technology before it is ready and become dependent on those

A glimpse at the state of the componentised web seems to validate this. Web Components are amazingly necessary for the future of apps on the web platform, but they aren’t ready yet. Many of the frameworks that fill the gap give me great solutions right now, but the effort I have to put in to learn them will make it hard for me to ever switch away from them. We’ve been there before: just try to find a junior JavaScript developer who knows the DOM instead of using jQuery for everything.

The cool new thing now is static HTML pages, as they run fast, don’t take many resources and are very portable. Except that we already have 298 different generators to choose from if we want to create them. Or we could write static HTML by hand if all we have is a few sites. But where’s the fun in that?

Fredrik Noren had a great article about this lately called On Generalisation and put it quite succinctly:

Generalization is, in fact, prediction. We look at some things we have and we predict that any current and following entities in that group will look and behave sufficiently similar in the future to what we have now. We predict that we can write something that will cater to all, or most, of its future needs. And that makes perfect sense, if you just disregard one simple fact of life: humans are awful at predicting the future!

So let’s stop trying to build for an assumed problematic future that probably will never come and instead be thankful for what we have right now.

Such amazing times we live in

If you play with the web these days and you leave your “everything is broken, I must fix it!” hat off, it is amazing how much fun you can have. The other day I wrote Makethumbnails.com – a quick app that allows you to drag and drop images into your browser and get a zip of thumbnails back. All without a server in between, all working offline and written on a plane without a web connection using only the developer tools built into the browser these days.

We have an amazing amount of new events, sensors and data to play with. For example, reading out the ambient light around a laptop is a simple event handler:

```javascript
window.addEventListener('devicelight', function(e) {
  var lv = e.value;
  // lv is the light in lux
});
```

You can use this to switch from a dark on light to a light on dark display. Or you could detect a 0 and know that the end user is currently covering their camera with their hands and provide a very simple hand gesture interface that way. This sensor is always on and you don’t need to have the camera enabled. How cool is that?
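A minimal sketch of both ideas, assuming the `devicelight` event is available in the browser at hand. The `reactToLight` helper, the 50 lux threshold, the mode names and the `data-light-mode` attribute are assumptions picked for the example, not values from any specification.

```javascript
// Assumed threshold: below this many lux we treat the room as dim.
var DIM_LUX = 50;

// Hypothetical helper: decide what to do with a light reading in lux.
function reactToLight(lux) {
  if (lux === 0) {
    return 'gesture'; // sensor fully covered, e.g. by a hand
  }
  return lux < DIM_LUX ? 'light-on-dark' : 'dark-on-light';
}

// In a browser: switch the display mode, or treat a reading of 0 as a gesture.
if (typeof window !== 'undefined' && typeof window.addEventListener === 'function') {
  window.addEventListener('devicelight', function (e) {
    var mode = reactToLight(e.value);
    if (mode === 'gesture') {
      console.log('Sensor covered, treat as a hand gesture');
    } else {
      document.documentElement.setAttribute('data-light-mode', mode);
    }
  });
}
```

A style sheet could then key its colours off the attribute, and the real threshold would need tuning per device.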

Are there other sensors or features in devices you’d like to have? Please ask on the feedback form about Open Web Apps and you can be part of the next iteration of web interaction.

Developer tools across browsers have moved beyond the view-source functionality, and all of them now offer timelines, step-by-step debugging, network information and even device or screen emulation and visual editors for colours and animations. Most also offer some sort of built-in editor and remote debugging of devices. If you miss something there, here is another channel to tell the makers about that.

It is a big, fragmented world out there

The next big boom of the web is not in the Western world and not on laptops and desktops that are connected with massively fast lines. We live in a mobile world and the predictability of what device our end users will have is gone. Surveys of Android usage showed 18,796 different devices in use already, and both Mozilla’s and Google’s reach into emerging markets with under $100 devices means the light-weight web is going to be a massive thing for all of us. This is why we need to re-think our ways.

First of all, offline first should be our mantra. There is no steady connection we can rely on. Alex Feyerke has a great talk about this.
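One way to read “offline first”, as a hedged sketch rather than a recipe: show what is stored locally straight away and treat the network as a bonus. `offlineFirst`, `store` and `fetchFresh` are hypothetical names; `store` can be anything with `get` and `set` (a `Map` works), and `fetchFresh` is any function that returns a Promise.

```javascript
// Hypothetical offline-first read: answer from the local copy right
// away, then try the network and update the copy if that works.
// onData(value, source) is called once per value that becomes available.
function offlineFirst(key, store, fetchFresh, onData) {
  var cached = store.get(key);
  if (cached !== undefined) {
    onData(cached, 'cache');
  }
  return fetchFresh(key).then(function (fresh) {
    store.set(key, fresh);
    onData(fresh, 'network');
    return fresh;
  }, function () {
    // No connection: the cached copy (if any) is all we have.
    return cached;
  });
}
```

With a `Map` as the store and a `fetchFresh` that rejects, the caller still gets the cached value immediately, which is the behaviour you want on a flaky connection.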

Secondly, we need to ensure that our solutions run smoothly on very low end devices. For this, there are a few tricks and developer tools give us great insight into where we waste memory and framerate. Angelina Fabbro has a great talk about that.

In general, the web is and stays an amazingly useful resource, now more than ever. Tools like Github, JSFiddle, JSBin, CodePen and many others allow us to build things together and be in constant communication. Together.js (built into JSFiddle as the ‘collaboration’ button) allows us to code together with a text or voice chat while seeing each other’s cursors. This is an incredible opportunity to make coding more human and help one another whilst we develop instead of telling each other how we should develop.

Let’s use the web to work on things together. Don’t wait to build the perfect solution. Share it early, take on advice and pull requests and together we can build much better products.